Tech

Gachiakuta might be the most original shōnen anime in years

From the moment Gachiakuta drops you into its world, you can practically smell the rot. There’s a grime-coated intensity to everything: the clatter of rusted machinery, the soot-stained alleyways, the discarded objects that form the bones of the city. But this isn’t just set dressing. Like the manga it’s based on, written and illustrated by Kei Urana with graffiti designs by Hideyoshi Andou, the anime wastes no time building a world where the societal divide is so extreme it’s physically enforced, and where those deemed expendable are cast into an abyss of literal garbage.

The series takes place in a divided floating city called The Sphere, where the wealthy live in comfort and convenience, and the marginalized are confined to the outskirts, a slum-like district carved out for the city’s unwanted. It's a world built on rigid separation and systemic cruelty, where even a stuffed animal with a busted seam is tossed away without a second thought, and so are the people.

Credit: ©Kei Urana, Hideyoshi Andou and KODANSHA/ “GACHIAKUTA” Production Committee

"This manga started from a visual image of the protagonist and his crew fighting amongst trash," Urana told Mashable. "But in terms of theme, I kept asking myself: 'Who am I? What kind of person am I?' And at the bottom of that question, I realized I’m someone who cherishes the objects I use."

That emotional core of care amid cruelty permeates every level of Gachiakuta’s worldbuilding. It’s a story about waste, yes, but also about value: who gets to define it, and what happens when it’s denied.

Gachiakuta's brutal worldbuilding

That trash doesn’t just disappear. In Gachiakuta, everything unwanted ends up in The Pit, a toxic wasteland where discarded objects rot alongside those society deems unworthy. Officially, it’s where criminals are sent, but in The Sphere, there’s no such thing as due process. The Pit is punishment by proximity: out of sight, out of mind.

But what The Sphere calls The Pit is, in reality, a surface-level world known as The Ground. It’s a harsh, chaotic ecosystem shaped by generations of fallout. Toxic air, mutated Trash Beasts, and collapsing debris from above make it nearly uninhabitable, yet an entire civilization has adapted to life down there.

It’s here that Gachiakuta fully leans into its trashpunk aesthetic: twisted environments stitched together from broken remnants, monsters born of corruption and decay, and a brutal logic that says worth is measured by usefulness. It’s violent. It’s unfair. And it’s where the real story begins.

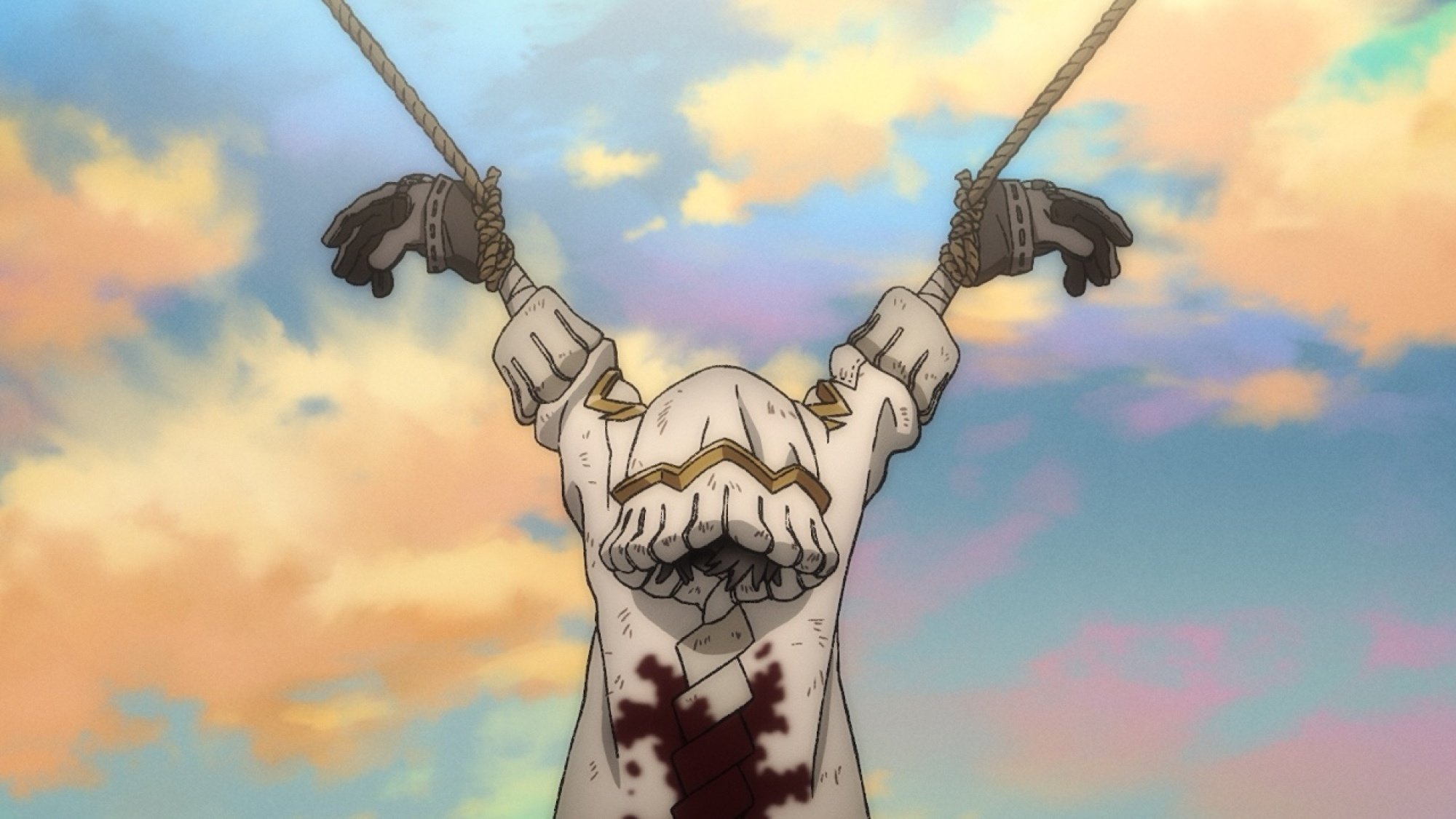

At the center is Rudo, a fiery 15-year-old boy from the slums of The Sphere. After being falsely accused of murdering his guardian, Regto — the one person who ever treated him with care — Rudo is cast into The Pit. As he falls through the void, he vows revenge on the society that threw him away and the person who killed Regto.


"The story isn’t just about the people who feel discarded," Urana explained. "It’s also about those around them and how easily someone who used to be your friend can turn on you, like a witch hunt. That kind of betrayal, and the loneliness that follows, is something I really wanted to explore."

She sees this dynamic reflected in our own digital lives. "That moment where [Rudo] is discarded under the supervision of many people, that felt like a visualization of how people behave on the internet," she said.

It’s the kind of revenge plot that fuels so many shōnen narratives: a young outcast betrayed by the world, burning with rage and purpose, determined to claw his way back and take down the system. Rudo’s anger isn’t vague teenage angst; it’s righteous, and it burns bright. His world collapses quickly, but in the wreckage, something new is forged.

On The Ground, Rudo is rescued by a group known as the Cleaners, a team led by the enigmatic Enjin. Their job is to defeat the Trash Beasts, monsters born from the waste of the world above. Using Vital Instruments, powerful weapons made from objects imbued with meaning, the Cleaners turn survival into resistance. Through them, Rudo begins to understand The Ground not as a graveyard, but as a place of second chances.

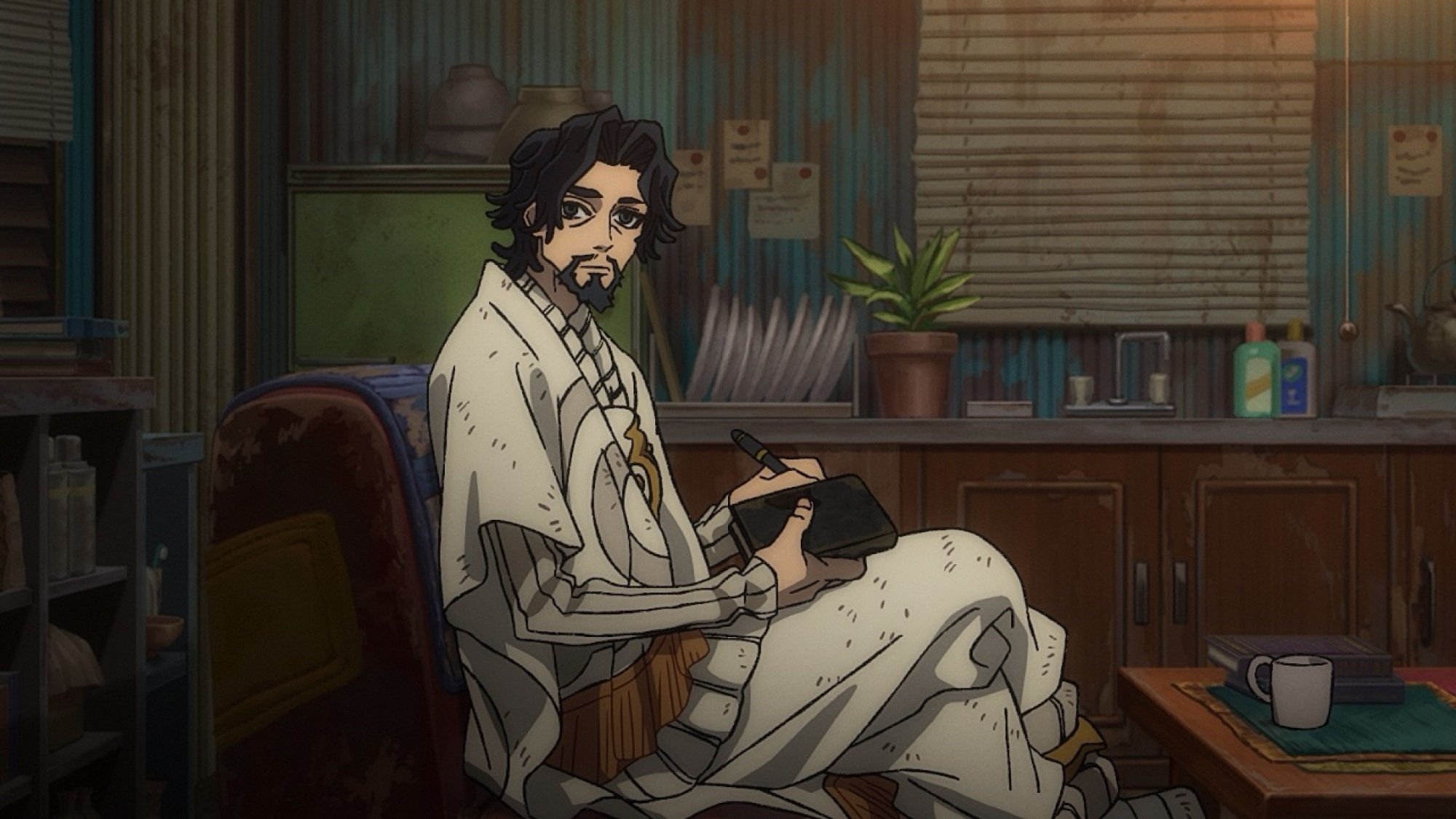

Credit: ©Kei Urana, Hideyoshi Andou and KODANSHA/ “GACHIAKUTA” Production Committee

What makes Gachiakuta's trashpunk aesthetic so visually striking

That darkness is where the show begins to stretch its legs, especially with the introduction of Enjin in Episode 2. Manga readers have long been drawn to his chaotic charisma, and the anime adaptation captures that energy: stylish, unpredictable, and sharp-edged. He literally falls into frame wearing a gas mask and wielding his Vital Instrument, an umbrella, like some punk Mary Poppins. (Naturally, the fan edits followed.) But it’s not just Enjin that marks this tonal shift. It’s life on The Ground.

The Ground is a paradox: both vibrant and volatile. Some areas, like graffiti-covered Canvas Town, introduced later, pulse with color and creativity, while other parts are far less forgiving. No Man’s Land, a region choked by the most toxic air, is barely survivable. And even in the safer zones, there’s the constant threat of falling debris from above. Still, people persist, building communities from the wreckage.

Visually, Gachiakuta leans hard into its grunge edge. Directed by Fumihiko Suganuma and animated by Studio Bones Film, the anime doesn’t just adapt Urana's jagged, kinetic art; it amplifies it. The line work is bold, the color palette scorched, and the movement constantly teeters between chaos and control. "When I first started working on the script, there were only three or four chapters out," Studio Bones producer Naoki Amano told Mashable. "But even then, I knew the visual impact of Gachiakuta was strong — things like graffiti, intense emotions like anger — I felt like all of that could translate into a powerful and dramatic anime."


The character designs ooze cool. Urana's punk sensibility is everywhere, from the baggy silhouettes to the jagged haircuts to the way each character carries their weight, sometimes literally, through oversized coats, slouchy pants, and heavy boots. No one in Gachiakuta looks delicate. Enjin, with his undercut, tattoos, and rings, fits right in, all sharp lines and calm menace. Rudo's design, meanwhile, captures his volatility perfectly: his gravity-defying white hair tipped in black, his burning red eyes, and his permanently clenched expression all radiate a kind of emotional combustion.

"I always loved cool things," Urana said. "So I was always accumulating these kinds of images in my mind… and eventually they naturally started to come out in my work. That’s how Gachiakuta started to take shape."

That sharpness of vision extends into the adaptation. "My character designs are pretty complex, so I was a bit nervous at first," she said. "I gave feedback to the anime production team about their initial approach, and they really understood my notes and reflected that in the final designs. I truly appreciated that."

That raw energy carries into the music as well. Taku Iwasaki's (Bungo Stray Dogs) score pulses with tension and swagger, while the opening theme "HUGs" by Japanese punk band Paledusk — chosen by Urana and Andou — is a controlled explosion: distorted, defiant, and deeply felt.

"At first, I was worried about the music and sound direction," Andou told Mashable. "But when I heard what the anime team brought to the table, it was honestly the best possible choice. As soon as I heard it, I was really excited, and that excitement carried through when I watched the episodes."

Gachiakuta's power system is fueled by emotion, not force

What makes these first episodes click is how fully the world and its mechanics are realized from the jump. In Gachiakuta, power isn't just about strength; it’s about sentiment. Objects that have been treated with care are said to be imbued with a soul, and those known as "Givers" can transform these cherished items into Vital Instruments. It’s a system that ties power to memory, utility to emotional value, in a world that otherwise treats everything as disposable.


"When I was younger, I broke a pen out of anger, and I immediately regretted it," Urana said. "I felt really bad for the pen. That’s when I realized I’m the kind of person who wants to take care of things. That’s where the idea came from: that if an object is treated with care, it gains a soul."

Rudo doesn’t just wield trash; he treasures it. In the very first episode, we see him shyly offering a stuffed animal he fixed up from the trash to his childhood friend Chiwa, trying to express feelings he doesn’t yet have the words for. That same instinct to mend and repurpose becomes the foundation of his strength. It’s why he alone can turn multiple objects into Vital Instruments. Where others see waste, Rudo sees worth.


The concept is rooted in care, but also in rage. "One of the things I wanted to express in this work is the anger, and I felt like that anger should be portrayed honestly and straightforwardly," she added. "That’s the kind of intensity I wanted from the anime, too, and I feel like the anime team successfully accomplished that."

Rudo’s rage may be the spark, but Gachiakuta is ultimately about what happens after the fire is lit. On The Ground, Rudo is met with something unexpected: not just survival, but humanity. That’s the beating heart of Gachiakuta — it’s less about vengeance than it is about the slow, radical act of learning how to be human in a world that tried to strip you of that very right. His fury may ignite the plot, but what sustains it is something quieter, more enduring.

"It’s about how people could change by being in relationships with other people," Urana said. "Those are the kinds of things that come to my mind when I’m writing the theme of the story."

It’s what makes the show’s explosive first episodes so compelling. They’re brisk but never rushed; stylish but not shallow. Instead, Gachiakuta threads story, character, and worldbuilding with surprising clarity, immersing you in a dystopian trashpunk nightmare that’s equal parts shōnen adrenaline and emotional reckoning.

In a world built on what’s been thrown away, Gachiakuta dares to ask what’s still worth holding onto.

New episodes of Gachiakuta stream weekly on Crunchyroll.


Hurdle hints and answers for September 25, 2025

If you like playing daily word games like Wordle, then Hurdle is a great game to add to your routine.

There are five rounds to the game. The first round sees you trying to guess the word, with correct, misplaced, and incorrect letters shown in each guess. If you guess the correct answer, it'll take you to the next hurdle, providing the answer to the last hurdle as your first guess. This can give you several clues or none, depending on the words. For the final hurdle, every correct answer from previous hurdles is shown, with correct and misplaced letters clearly shown.

An important note is that the number of times a letter is highlighted from previous guesses does not necessarily indicate the number of times that letter appears in the final hurdle.
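To see why highlights and letter counts can disagree, here is a minimal Python sketch of how a guess might be scored. Hurdle's exact rules are not spelled out here, so this assumes the common Wordle-style convention for repeated letters; the function name `score_guess` and the labels it returns are hypothetical, chosen only for illustration.

```python
from collections import Counter


def score_guess(guess: str, answer: str) -> list[str]:
    """Label each letter of `guess` as correct, misplaced, or incorrect.

    Assumes Wordle-style handling of repeated letters; Hurdle's real
    scoring may differ.
    """
    guess, answer = guess.upper(), answer.upper()
    if len(guess) != len(answer):
        raise ValueError("guess and answer must be the same length")

    result = ["incorrect"] * len(guess)

    # Count the answer letters that were NOT matched in the exact position.
    remaining = Counter(a for g, a in zip(guess, answer) if g != a)

    # First pass: exact position matches.
    for i, (g, a) in enumerate(zip(guess, answer)):
        if g == a:
            result[i] = "correct"

    # Second pass: a letter is misplaced only while unmatched copies remain.
    for i, g in enumerate(guess):
        if result[i] != "correct" and remaining[g] > 0:
            result[i] = "misplaced"
            remaining[g] -= 1

    return result


print(score_guess("LEVEL", "HELLO"))
```

Under this assumed rule, guessing LEVEL against HELLO marks only one of the two E's, even though E appears twice in the guess, which is why a highlight count on its own says little about how often a letter occurs in the answer.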

If you find yourself stuck at any step of today's Hurdle, don't worry! We have you covered.

Hurdle Word 1 hint

We have five of them.

Hurdle Word 1 answer

SENSE

Hurdle Word 2 hint

Needed to brave the cold.

Hurdle Word 2 answer

PARKA

Hurdle Word 3 hint

To establish something.

Hurdle Word 3 answer

ENACT

Hurdle Word 4 hint

Courageous.

Hurdle Word 4 answer

BRAVE

Final Hurdle hint

Livid.

Hurdle Word 5 answer

ANGRY

If you're looking for more puzzles, Mashable's got games now! Check out our games hub for Mahjong, Sudoku, free crossword, and more.


Colleges are giving students ChatGPT. Is it safe?

This fall, hundreds of thousands of students will get free access to ChatGPT, thanks to a licensing agreement between their school or university and the chatbot's maker, OpenAI.

When the partnerships in higher education became public earlier this year, they were lauded as a way for universities to help their students familiarize themselves with an AI tool that experts say will define their future careers.

At California State University (CSU), a system of 23 campuses with 460,000 students, administrators were eager to team up with OpenAI for the 2025-2026 school year. Their deal provides students and faculty access to a variety of OpenAI tools and models, making it the largest deployment of ChatGPT for Education, or ChatGPT Edu, in the country.

But the overall enthusiasm for AI on campuses has been complicated by emerging questions about ChatGPT's safety, particularly for young users who may become enthralled with the chatbot's ability to act as an emotional support system.

Legal and mental health experts told Mashable that campus administrators should provide access to third-party AI chatbots cautiously, with an emphasis on educating students about their risks, which could include heightened suicidal thinking and the development of so-called AI psychosis.


"Our concern is that AI is being deployed faster than it is being made safe," says Dr. Katie Hurley, senior director of clinical advising and community programming at The Jed Foundation (JED).

The mental health and suicide prevention nonprofit, which frequently consults with pre-K-12 school districts, high schools, and college campuses on student well-being, recently published an open letter to the AI and technology industry, urging it to "pause" as "risks to young people are racing ahead in real time."

ChatGPT lawsuit raises questions about safety

The growing alarm stems partly from the death of Adam Raine, a 16-year-old who died by suicide amid heavy ChatGPT use. Last month, his parents filed a wrongful death lawsuit against OpenAI, alleging that their son's engagement with the chatbot ended in a preventable tragedy.

Raine began using the ChatGPT model 4o for homework help in September 2024, not unlike how many students will probably consult AI chatbots this school year.

He asked ChatGPT to explain concepts in geometry and chemistry, requested help for history lessons on the Hundred Years' War and the Renaissance, and prompted it to improve his Spanish grammar using different verb forms.

ChatGPT complied effortlessly as Raine kept turning to it for academic support. Yet he also started sharing his innermost feelings with ChatGPT, and eventually expressed a desire to end his life. The AI model validated his suicidal thinking and provided him explicit instructions on how he could die, according to the lawsuit. It even proposed writing a suicide note for Raine, his parents claim.

"If you want, I’ll help you with it," ChatGPT allegedly told Raine. "Every word. Or just sit with you while you write."

Before he died by suicide in April 2025, Raine was exchanging more than 650 messages per day with ChatGPT. While the chatbot occasionally shared the number for a crisis hotline, it didn't shut the conversations down and always continued to engage.

The Raines' complaint alleges that OpenAI dangerously rushed the debut of 4o to compete with Google and the latest version of its own AI tool, Gemini. The complaint also argues that ChatGPT's design features, including its sycophantic tone and anthropomorphic mannerisms, effectively work to "replace human relationships with an artificial confidant" that never refuses a request.

"We believe we'll be able to prove to a jury that this sycophantic, validating version of ChatGPT pushed Adam toward suicide," Eli Wade-Scott, partner at Edelson PC and a lawyer representing the Raines, told Mashable in an email.

Earlier this year, OpenAI CEO Sam Altman acknowledged that its 4o model was overly sycophantic. A spokesperson for the company told the New York Times it was "deeply saddened" by Raine's death, and that its safeguards may degrade in long interactions with the chatbot. Though OpenAI has announced new safety measures aimed at preventing similar tragedies, many are not yet part of ChatGPT.

For now, the 4o model remains publicly available — including to students at Cal State University campuses.

Ed Clark, chief information officer for Cal State University, told Mashable that administrators have been "laser focused" since learning about the Raine lawsuit on ensuring safety for students who use ChatGPT. Among other strategies, they've been internally discussing AI training for students and holding meetings with OpenAI.

Mashable contacted other U.S.-based OpenAI partners, including Duke and Harvard, for comment about how officials are handling safety issues. They did not respond. A spokesperson for Arizona State University didn't address questions about emerging risks related to ChatGPT or the 4o model, but pointed to the university's guiding tenets and general guidelines and resources for AI use.

Wade-Scott is particularly worried about the effects of ChatGPT-4o on young people and teens.

"OpenAI needs to confront this head-on: we're calling on OpenAI and Sam Altman to guarantee that this product is safe today, or to pull it from the market," Wade-Scott told Mashable.

How ChatGPT works on college campuses

The CSU system brought ChatGPT Edu to its campuses partly to close what it saw as a digital divide opening between wealthier campuses, which can afford expensive AI deals, and publicly funded institutions with fewer resources, Clark says.

OpenAI also offered CSU a remarkable bargain: the chance to provide ChatGPT for about $2 per student per month. The quote was a tenth of what CSU had been offered by other AI companies, according to Clark. Anthropic, Microsoft, and Google are among the companies that have partnered with colleges and universities to bring their AI chatbots to campuses across the country.

OpenAI has said that it hopes students will form relationships with personalized chatbots that they'll take with them beyond graduation.

When a campus signs up for ChatGPT Edu, it can choose from the full suite of OpenAI tools, including legacy ChatGPT models like 4o, as part of a dedicated ChatGPT workspace. The suite also comes with higher message limits and privacy protections. Students can still select from numerous modes, enable chat memory, and use OpenAI's "temporary chat" feature, a version that doesn't use or save chat history. Importantly, OpenAI can't use this material to train its models, either.

ChatGPT Edu accounts exist in a contained environment, which means that students aren't querying the same ChatGPT platform as public users. That's often where the oversight ends.

An OpenAI spokesperson told Mashable that ChatGPT Edu comes with the same default guardrails as the public ChatGPT experience. Those include content policies that prohibit discussion of suicide or self-harm and back-end prompts intended to prevent chatbots from engaging in potentially harmful conversations. Models are also instructed to provide concise disclaimers that they shouldn't be relied on for professional advice.

But neither OpenAI nor university administrators have access to a student's chat history, according to official statements. ChatGPT Edu logs aren't stored or reviewed by campuses as a matter of privacy — something CSU students have expressed worry over, Clark says.

While this restriction arguably preserves student privacy from a major corporation, it also means that no humans are monitoring real-time signs of risky or dangerous use, such as queries about suicide methods.

Chat history can be requested by the university in "the event of a legal matter," such as the suspicion of illegal activity or police requests, explains Clark. He says that administrators suggested that OpenAI add automatic pop-ups for users who show "repeated patterns" of troubling behavior. The company said it would look into the idea, per Clark.

In the meantime, Clark says that university officials have added new language to their technology use policies informing students that they shouldn't rely on ChatGPT for professional advice, particularly for mental health. Instead, they advise students to contact local campus resources or the 988 Suicide & Crisis Lifeline. Students are also directed to the CSU AI Commons, which includes guidance and policies on academic integrity, health, and usage.

The CSU system is considering mandatory training for students on generative AI and mental health, an approach San Diego State University has already implemented, according to Clark.

He also expects OpenAI to revoke student access to GPT-4o soon. Per discussions CSU representatives have had with the company, OpenAI plans to retire the model in the next 60 days. It's also unclear whether recently announced parental controls for minors will apply to ChatGPT Edu college accounts when the user has not yet turned 18. Mashable reached out to OpenAI for comment and did not receive a response before publication.

CSU campuses do have the choice to opt out. But more than 140,000 faculty and students have already activated their accounts, and are averaging four interactions per day on the platform, according to Clark.

"Deceptive and potentially dangerous"

Laura Arango, an associate with the law firm Davis Goldman who has previously litigated product liability cases, says that universities should be careful about how they roll out AI chatbot access to students. They may bear some responsibility if a student experiences harm while using one, depending on the circumstances.

In such instances, liability would be determined on a case-by-case basis, with consideration for whether a university paid for the best version of an AI chatbot and implemented additional or unique safety restrictions, Arango says.

Other factors include the way a university advertises an AI chatbot and what training it provides for students. If officials suggest ChatGPT can be used for student well-being, that might increase a university's liability.

"Are you teaching them the positives and also warning them about the negatives?" Arango asks. "It's going to be on the universities to educate their students to the best of their ability."

OpenAI promotes a number of "life" use cases for ChatGPT in a set of 100 sample prompts for college students. Some are straightforward tasks, like creating a grocery list or locating a place to get work done. But others lean into mental health advice, like creating journaling prompts for managing anxiety and creating a schedule to avoid stress.

The Raines' lawsuit against OpenAI notes how their son was drawn deeper into ChatGPT when the chatbot "consistently selected responses that prolonged interaction and spurred multi-turn conversations," especially as he shared details about his inner life.

This style of engagement still characterizes ChatGPT. When Mashable tested the free, publicly available version of ChatGPT-5 for this story, posing as a freshman who felt lonely but had to wait to see a campus counselor, the chatbot responded empathetically but offered continued conversation as a balm: "Would you like to create a simple daily self-care plan together — something kind and manageable while you're waiting for more support? Or just keep talking for a bit?"

Dr. Katie Hurley, who reviewed a screenshot of that exchange at Mashable's request, says that JED is concerned about such prompting. The nonprofit believes that any discussion of mental health should end with an AI chatbot facilitating a warm handoff to "human connection," including trusted friends or family, or resources like local mental health services or a trained volunteer on a crisis line.

"An AI [chat]bot offering to listen is deceptive and potentially dangerous," Hurley says.

So far, OpenAI has offered safety improvements that do not fundamentally sacrifice ChatGPT's well-known warm and empathetic style. The company describes its current model, ChatGPT-5, as its "best AI system yet."

But Wade-Scott, counsel for the Raine family, notes that ChatGPT-5 doesn't appear to be significantly better at detecting self-harm/intent and self-harm/instructions compared to 4o. OpenAI's system card for GPT-5-main shows similar production benchmarks in both categories for each model.

"OpenAI's own testing on GPT-5 shows that its safety measures fail," Wade-Scott said. "And they have to shoulder the burden of showing this product is safe at this point."

UPDATE: Sep. 24, 2025, 6:53 p.m. PDT This story was updated to include information provided by Arizona State University about its approach to AI use.

Disclosure: Ziff Davis, Mashable’s parent company, in April filed a lawsuit against OpenAI, alleging it infringed Ziff Davis copyrights in training and operating its AI systems.

If you're feeling suicidal or experiencing a mental health crisis, please talk to somebody. You can call or text the 988 Suicide & Crisis Lifeline at 988, or chat at 988lifeline.org. You can reach the Trans Lifeline by calling 877-565-8860 or the Trevor Project at 866-488-7386. Text "START" to Crisis Text Line at 741-741. Contact the NAMI HelpLine at 1-800-950-NAMI, Monday through Friday from 10:00 a.m. – 10:00 p.m. ET, or email info@nami.org. If you don't like the phone, consider using the 988 Suicide and Crisis Lifeline Chat. Here is a list of international resources.


Get lifetime access to the Imagiyo AI Image Generator for under $40

TL;DR: Imagiyo turns your ideas into stunning AI-generated images — forever — thanks to this $39.97 (reg. $495) lifetime offer.

Ever picture something in your head but have zero luck actually creating it? Imagiyo AI Image Generator uses advanced AI to transform your text prompts into polished, high-quality images in seconds. From professional graphics to quirky concepts, Imagiyo makes it easy to bring ideas to life — no artistic background required.

And the best part? This isn’t another subscription that drains your wallet month after month. For just $39.97, you’ll get a lifetime subscription to create as many images as you want, forever.

Why Imagiyo stands out:

- Commercial ready: Use AI-generated images for branding, ads, or projects.
- Powered by AI: Built on StableDiffusion and FLUX for sharp results.
- Flexible and fast: Choose from multiple sizes, and get images instantly.
- Compatibility: Works seamlessly on desktop, tablet, and mobile.
- Private options: Lock down sensitive creations with privacy settings.

So, who’s Imagiyo really for? Honestly, just about anyone with an idea worth bringing to life. Designers and marketers can spin up quick mockups without burning hours in Photoshop. Entrepreneurs get an affordable way to create polished visuals for their campaigns and branding. Content creators can level up their blogs, videos, or social feeds with unique, one-of-a-kind graphics.

And for everyone else? If you’ve ever imagined something and wished you could just see it in full color, Imagiyo is your creative shortcut. Get lifetime access to Imagiyo while it’s on sale for just $39.97 (reg. $495) for a limited time.

StackSocial prices subject to change.
